Making AI/ML Models Production Ready With MLOps

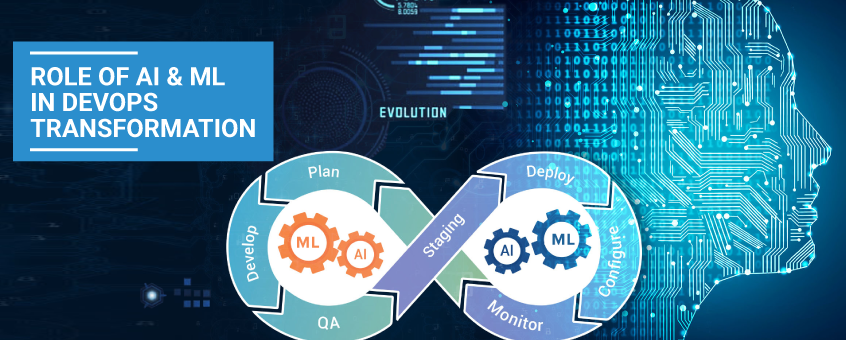

Turning AI and ML experiments into reliable production systems is now a core business need. Yet as AI adoption accelerates, many organizations still struggle to operationalize their models. MLOps offers a practical framework to bridge this gap and bring consistency to model delivery.

In simple terms, it connects data science with engineering practices. As a result, teams can deploy models faster, monitor them continuously, and improve outcomes over time.

Why MLOps Is Essential for AI at Scale

AI systems improve through learning and feedback. Without proper operational practices, however, these systems remain fragile, which is why many promising initiatives stall after the prototype stage.

With a structured operational approach, organizations can:

- Automate training and deployment workflows

- Track experiments and model versions

- Improve reliability and compliance

- Optimize cloud and GPU usage

- Scale intelligent systems with confidence

At the same time, this approach aligns DevOps, DevSecOps, and DataOps teams, which reduces delivery friction.

From Idea to Production: The MLOps Perspective

Most teams begin with data scientists building and tuning models. Later, those models move to engineering teams for deployment. However, this handoff often creates delays and rework.

To close this gap, specialized MLOps engineers automate pipelines and manage the full model lifecycle. Consequently, models reach users faster and remain stable in production.

Deployment Maturity Levels in MLOps

Early-Stage MLOps Practices

Many teams start by wrapping models in Flask or FastAPI and deploying them with Docker or Kubernetes. This works for testing. In production, however, it tends to scale poorly, because every model becomes a hand-maintained service without shared versioning, monitoring, or retraining.
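Here is a minimal sketch of this early-stage pattern. To stay dependency-free, it uses Python's standard-library `http.server` instead of Flask or FastAPI. The `/predict` route, the JSON request shape, the `MODEL_VERSION` tag, and the fixed-weight `predict` function are all hypothetical stand-ins for a real trained model.

```python
import json
import sys
from http.server import BaseHTTPRequestHandler, HTTPServer

MODEL_VERSION = "0.1.0"  # hypothetical version tag, hand-maintained in this pattern
WEIGHTS = [0.5, -0.25, 1.0]  # toy stand-in for a trained model's parameters


def predict(features: list) -> float:
    """Toy 'model': a fixed linear function of three features."""
    return sum(w * x for w, x in zip(WEIGHTS, features))


def handle_predict(body: bytes) -> tuple:
    """Parse a JSON request body and return (HTTP status, response payload)."""
    try:
        payload = json.loads(body)
        features = [float(x) for x in payload["features"]]
    except (ValueError, KeyError, TypeError):
        return 400, {"error": 'expected JSON like {"features": [1.0, 2.0, 3.0]}'}
    if len(features) != len(WEIGHTS):
        return 400, {"error": f"expected exactly {len(WEIGHTS)} features"}
    return 200, {"prediction": predict(features), "model_version": MODEL_VERSION}


class PredictHandler(BaseHTTPRequestHandler):
    def do_POST(self) -> None:
        if self.path != "/predict":
            self.send_error(404)
            return
        length = int(self.headers.get("Content-Length", 0))
        status, payload = handle_predict(self.rfile.read(length))
        data = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(data)))
        self.end_headers()
        self.wfile.write(data)


def serve(port: int = 8080) -> None:
    """Blocking server loop; only started when the script is run with --serve."""
    HTTPServer(("0.0.0.0", port), PredictHandler).serve_forever()


if __name__ == "__main__" and "--serve" in sys.argv:
    serve()
```

In a Docker image, this file would be the container entrypoint, run with `--serve`. A Kubernetes Deployment could then run several replicas of that container.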

Custom MLOps Tooling

More mature organizations build internal orchestration systems. This offers flexibility but also increases maintenance effort, and over time these systems often become costly to operate.

Platform-Based MLOps Adoption

At the highest maturity level, teams adopt platforms like Kubeflow, Ray, or ClearML. These platforms support the full lifecycle, including training, deployment, and monitoring. As a result, operations become more predictable.

This maturity view aligns with industry guidance from IBM on scalable model serving.

How to Get Started With MLOps

Starting with operational machine learning can feel overwhelming. Fortunately, community-driven stack templates help teams evaluate what they truly need.

A practical setup usually includes:

- Versioned data ingestion

- Experiment tracking

- Automated pipelines

- Model registry and lineage

- Inference monitoring
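The first items on this list can be illustrated without committing to any particular tool. The sketch below, a plain-Python illustration under stated assumptions, does two things. It content-hashes each dataset to produce a version id, and it records every training run against that id, which is the core idea behind experiment tracking and lineage. `dataset_version`, `RunRecord`, and `ExperimentLog` are hypothetical names. Tools such as MLflow offer production-grade versions of the same idea through their own APIs.

```python
import hashlib
import json
import time
from dataclasses import asdict, dataclass, field


def dataset_version(rows: list) -> str:
    """Content-address a dataset: the same rows always yield the same version id.

    Keys are sorted so column order does not matter; row order does.
    """
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(canonical).hexdigest()[:12]


@dataclass
class RunRecord:
    run_id: int
    data_version: str  # lineage: which dataset version trained this run
    params: dict
    metrics: dict = field(default_factory=dict)
    created_at: float = field(default_factory=time.time)


class ExperimentLog:
    """Hypothetical in-memory experiment tracker; real trackers persist runs durably."""

    def __init__(self) -> None:
        self.runs: list = []

    def start_run(self, data_version: str, params: dict) -> RunRecord:
        run = RunRecord(len(self.runs) + 1, data_version, dict(params))
        self.runs.append(run)
        return run

    def best_run(self, metric: str) -> RunRecord:
        """Return the run with the lowest value of `metric` (assumes lower is better)."""
        scored = [r for r in self.runs if metric in r.metrics]
        if not scored:
            raise ValueError(f"no run has logged {metric!r}")
        return min(scored, key=lambda r: r.metrics[metric])

    def lineage(self, run_id: int) -> dict:
        """Everything needed to trace a model back to its data and settings."""
        return asdict(self.runs[run_id - 1])
```

A training script would use these pieces in order:

1. Call `dataset_version` on its input.
2. Call `start_run` with its hyperparameters.
3. Record metrics such as RMSE on the returned record.

Later, `lineage` answers which data and settings produced a given model.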

Not every project needs every component. Therefore, selecting tools based on business goals is critical.

The MLOps Lifecycle in Practice

A typical lifecycle follows clear steps:

- Data collection and validation

- Model experimentation and training

- Pipeline automation

- Model approval and registration

- Deployment and inference

- Monitoring and retraining
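The lifecycle steps above can be sketched end to end on a toy problem. This is an illustration under simplifying assumptions, not a reference implementation:

- The model is a one-feature least-squares line.
- The registry is an in-memory list.
- The approval gate is a hold-out mean-absolute-error threshold (`max_mae`, with an arbitrary default).
- Drift is flagged by a simple mean-shift heuristic rather than a formal statistical test.

All names are hypothetical.

```python
"""Toy ML lifecycle: validate -> train -> approve/register -> serve -> monitor/retrain."""
from dataclasses import dataclass, field
from statistics import mean, pstdev


@dataclass
class Model:
    slope: float
    intercept: float

    def predict(self, x: float) -> float:
        """Deployment and inference: score a single feature value."""
        return self.slope * x + self.intercept


@dataclass
class RegistryEntry:
    version: int
    model: Model
    holdout_mae: float
    train_x_mean: float  # training-time feature statistics, kept for drift checks
    train_x_std: float


@dataclass
class Registry:
    """Hypothetical in-memory registry; the latest approved entry serves production."""
    entries: list = field(default_factory=list)

    def register(self, model: Model, holdout_mae: float, train_xs: list) -> RegistryEntry:
        entry = RegistryEntry(len(self.entries) + 1, model, holdout_mae,
                              mean(train_xs), pstdev(train_xs))
        self.entries.append(entry)
        return entry

    @property
    def production(self):
        return self.entries[-1] if self.entries else None


def validate(rows):
    """Data validation: drop rows with missing values, require enough data to fit."""
    clean = [(float(x), float(y)) for x, y in rows if x is not None and y is not None]
    if len(clean) < 2:
        raise ValueError("need at least two complete rows")
    return clean


def train(rows):
    """Training: ordinary least squares on a single feature."""
    xs = [x for x, _ in rows]
    ys = [y for _, y in rows]
    mx, my = mean(xs), mean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("feature has zero variance")
    slope = sum((x - mx) * (y - my) for x, y in rows) / sxx
    return Model(slope, my - slope * mx)


def mae(model, rows):
    """Mean absolute error of the model on (x, y) rows."""
    return mean(abs(model.predict(x) - y) for x, y in rows)


def run_pipeline(raw_train, raw_holdout, registry, max_mae=0.5):
    """Automated pipeline with an approval gate; returns the new entry or None if rejected."""
    train_rows = validate(raw_train)
    holdout_rows = validate(raw_holdout)
    model = train(train_rows)
    err = mae(model, holdout_rows)
    if err > max_mae:
        return None  # rejected: whatever is in production stays there
    return registry.register(model, err, [x for x, _ in train_rows])


def drift_detected(entry, live_xs, z_threshold=3.0):
    """Monitoring heuristic: the live feature mean has moved more than z_threshold
    training standard deviations away from the training mean."""
    std = entry.train_x_std or 1e-12  # defensive guard against division by zero
    return abs(mean(live_xs) - entry.train_x_mean) / std > z_threshold


def monitor_and_retrain(registry, live_xs, fresh_train, fresh_holdout):
    """Retrain through the same gated pipeline only when drift is detected."""
    current = registry.production
    if current is None or drift_detected(current, live_xs):
        run_pipeline(fresh_train, fresh_holdout, registry)  # may be rejected by the gate
    return registry.production
```

Retraining runs through the same `run_pipeline` gate. A drifted model is therefore replaced only when its successor passes the same approval check; otherwise the current production entry stays in place.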

Tools such as MLflow, Kubeflow, and Ray often support these stages. Platforms like Neptune.ai also help visualize how the ecosystem fits together across the lifecycle.

Selecting the Right MLOps Tools

Cloud-Based Services

Public cloud providers offer managed services like Amazon SageMaker, Google Vertex AI, and Azure ML. These services reduce setup time. However, they may limit flexibility and increase costs, especially for GPU usage.

Google outlines best practices for this approach here:

https://cloud.google.com/architecture/mlops-continuous-delivery-and-automation-pipelines-in-machine-learning

Kubernetes-Centered Stacks

Some teams prefer building platforms on Kubernetes using open-source tools. This approach provides control and portability. However, it requires strong platform engineering skills.

Commercial MLOps Platforms

Commercial solutions such as ClearML, Weights & Biases, and TrueFoundry reduce operational overhead. Even so, Kubernetes often remains the foundation for deployment.

Common Challenges in MLOps Adoption

Even experienced teams face recurring issues:

- Data privacy and security gaps

- Shortage of skilled engineers

- Tools without proper RBAC or authentication

- Poor platform design leading to inefficiency

- Rising cloud and GPU costs

- Unstable Kubernetes infrastructure
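The RBAC gap is easiest to see in a model registry. The sketch below assumes three hypothetical roles and a deny-by-default policy. It separates duties so that a data scientist can register a model but cannot promote it to production, unless a user is deliberately granted both roles. Real platforms express this through their own access-control configuration, not through code like this.

```python
from enum import Enum


class Action(Enum):
    VIEW = "view"
    REGISTER = "register"
    PROMOTE = "promote"  # move a registered model into production


# Hypothetical role-to-permission mapping for a model registry.
ROLE_PERMISSIONS = {
    "viewer": {Action.VIEW},
    "data_scientist": {Action.VIEW, Action.REGISTER},
    "ml_approver": {Action.VIEW, Action.PROMOTE},
}


def is_allowed(roles: list, action: Action) -> bool:
    """Deny by default: allow only if at least one known role grants the action."""
    return any(action in ROLE_PERMISSIONS.get(role, set()) for role in roles)


def require(user: dict, action: Action) -> None:
    """Raise PermissionError unless the user's roles permit the action."""
    if not is_allowed(user.get("roles", []), action):
        raise PermissionError(f"{user.get('name', 'unknown user')} may not {action.value} models")
```

Unknown roles and empty role lists grant nothing. A misconfigured account therefore fails closed rather than open.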

Because of these risks, expert guidance becomes valuable early on.

How ZippyOPS Supports Production-Ready AI

ZippyOPS helps organizations design and operate scalable platforms for machine learning workloads. Rather than deploying isolated tools, ZippyOPS focuses on long-term operational success.

ZippyOPS provides consulting, implementation, and managed services across:

- DevOps, DevSecOps, and DataOps

- Cloud and Infrastructure

- Automated Ops, AIOps, and MLOps workflows

- Microservices and Security

This approach enables faster releases, stronger governance, and predictable costs.

Learn more about ZippyOPS offerings:

https://zippyops.com/services/

https://zippyops.com/solutions/

https://zippyops.com/products/

For demos and technical insights, visit:

https://www.youtube.com/@zippyops8329

Conclusion

Operationalizing machine learning requires discipline, automation, and the right platform choices. When done correctly, it improves speed, reliability, and cost control.

In summary, a strong foundation helps organizations turn AI ideas into dependable products. With expert support, teams can scale their AI systems with confidence.

For guidance on strategy or implementation, contact sales@zippyops.com.